Extensive qualitative results show that the method can generate a much more diverse range of styles than SOTA comparisons. Extensive quantitative experiments support the idea the map is correct.

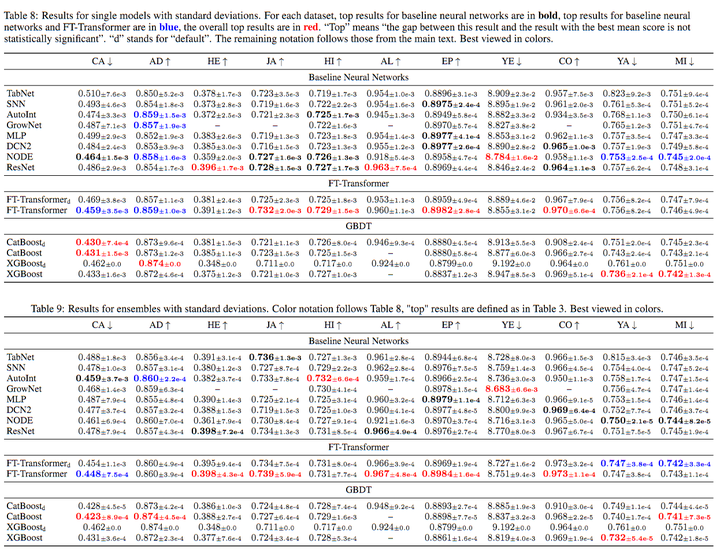

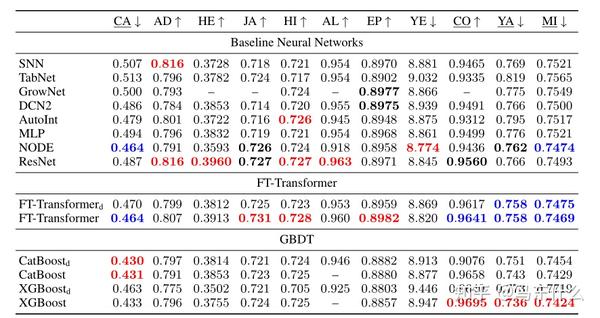

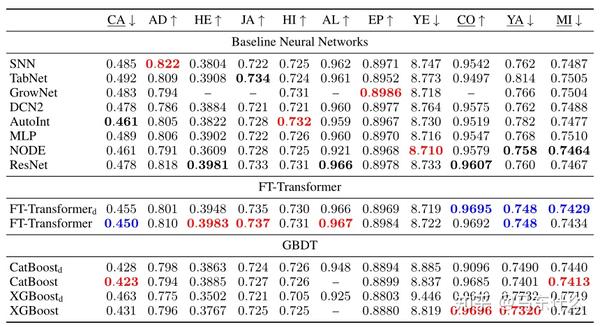

In contrast, current multimodal generation procedures cannot capture the complex styles that appear in anime. Under plausible assumptions, the map is not just diverse, but also correctly represents the probability of an anime, conditioned on an input face. This adversarial loss guarantees the map is diverse - a very wide range of anime can be produced from a single content code. An adversarial loss from our simple and effective definitions of style and content is derived. The research detailed in this paper shows how to learn a map that takes a content code, derived from a face image, and a randomly chosen style code to an anime image. GANs N’ Roses: Stable, Controllable, Diverse Image to Image Translation (works for videos too!) The GitHub repo associated with this paper can be found HERE. Finally, the authors design a simple adaptation of the Transformer architecture for tabular data that becomes a new strong DL baseline and reduces the gap between GBDT and DL models on datasets where GBDT dominates. Second, it’s demonstrated that a simple ResNet-like architecture is a surprisingly effective baseline, which outperforms most of the sophisticated models from the DL literature. First, it is shown that the choice between GBDT and DL models highly depends on data and there is still no universally superior solution. The authors carefully tune and evaluate them on a wide range of datasets and reveal two significant findings. This paper starts with a thorough review of the main families of DL models recently developed for tabular data. Moreover, the models are often not compared to each other, therefore, it is challenging to identify the best deep model for practitioners. However, since existing works often use different benchmarks and tuning protocols, it is unclear if the proposed models universally outperform GBDT. 
The recent literature on tabular DL proposes several deep architectures reported to be superior to traditional “shallow” models like Gradient Boosted Decision Trees.

The necessity of deep learning for tabular data is still an unanswered question addressed by a large number of research efforts. Revisiting Deep Learning Models for Tabular Data They generally contain a high degree of mathematics so be prepared. Consider that these are academic research papers, typically geared toward graduate students, post docs, and seasoned professionals. Especially relevant articles are marked with a “thumbs up” icon. Links to GitHub repos are provided when available. They are listed in no particular order with a link to each paper along with a brief overview. The articles listed below represent a small fraction of all articles appearing on the preprint server. arXiv contains a veritable treasure trove of statistical learning methods you may use one day in the solution of data science problems.

Researchers from all over the world contribute to this repository as a prelude to the peer review process for publication in traditional journals. In this recurring monthly feature, we filter recent research papers appearing on the preprint server for compelling subjects relating to AI, machine learning and deep learning – from disciplines including statistics, mathematics and computer science – and provide you with a useful “best of” list for the past month.
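The GANs N’ Roses summary describes a map that pairs one content code, derived from a face image, with a randomly chosen style code to produce an anime image. Drawing many style codes for the same content code yields many different outputs. The toy sketch below illustrates only that inference-time interface. It is not the paper's networks or its adversarial loss. The encoder and decoder are hypothetical stand-ins built from fixed random linear maps. The dimensions (`CONTENT_DIM`, `STYLE_DIM`, `IMAGE_DIM`), the helper names, and the standard-normal style prior are all assumptions made for illustration.

```python
import random
from typing import List

Vector = List[float]

# Hypothetical sizes chosen for illustration only (not from the paper).
CONTENT_DIM = 4
STYLE_DIM = 3
IMAGE_DIM = 5

# Hypothetical stand-in "decoder": fixed random linear maps seeded for
# reproducibility. In the real method these would be trained networks.
_weights_rng = random.Random(0)
W_CONTENT = [[_weights_rng.gauss(0.0, 1.0) for _ in range(CONTENT_DIM)]
             for _ in range(IMAGE_DIM)]
W_STYLE = [[_weights_rng.gauss(0.0, 1.0) for _ in range(STYLE_DIM)]
           for _ in range(IMAGE_DIM)]


def matvec(matrix: List[Vector], vec: Vector) -> Vector:
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(a * b for a, b in zip(row, vec)) for row in matrix]


def encode_content(face: Vector) -> Vector:
    """Hypothetical content encoder: keeps the first CONTENT_DIM features."""
    return face[:CONTENT_DIM]


def sample_style(rng: random.Random) -> Vector:
    """Draw a style code from a standard normal prior (an assumption here)."""
    return [rng.gauss(0.0, 1.0) for _ in range(STYLE_DIM)]


def decode(content: Vector, style: Vector) -> Vector:
    """Hypothetical decoder: content term plus style term, both linear."""
    content_part = matvec(W_CONTENT, content)
    style_part = matvec(W_STYLE, style)
    return [c + s for c, s in zip(content_part, style_part)]


def translate(face: Vector, n_samples: int, seed: int = 42) -> List[Vector]:
    """Encode one face once, then decode it with n_samples random styles."""
    rng = random.Random(seed)
    content = encode_content(face)
    return [decode(content, sample_style(rng)) for _ in range(n_samples)]


if __name__ == "__main__":
    face = [0.5, -1.0, 2.0, 0.3, 0.7, 1.1]  # made-up input features
    for output in translate(face, n_samples=3):
        print([round(x, 3) for x in output])
```

All samples share the same content code, and only the style code changes between them, so each output differs from the others. The paper's contribution lies in training the real encoder and decoder so that this variation is both wide and faithful to the distribution of anime styles. A toy like this cannot show that.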
